Nvidia cuda toolkit 10.0

- #Nvidia cuda toolkit 10.0 install#

- #Nvidia cuda toolkit 10.0 update#

- #Nvidia cuda toolkit 10.0 software#

- #Nvidia cuda toolkit 10.0 code#

- #Nvidia cuda toolkit 10.0 series#

#Nvidia cuda toolkit 10.0 software#

The CUDA platform is accessible to software developers through CUDA-accelerated libraries, compiler directives such as OpenACC, and extensions to industry-standard programming languages including C, C++ and Fortran. In a typical run, the GPU's CUDA cores execute the kernel in parallel, and the resulting data is then copied from GPU memory to main memory.

#Nvidia cuda toolkit 10.0 code#

CUDA was created by Nvidia. It is a software layer that gives direct access to the GPU's virtual instruction set and parallel computational elements, for the execution of compute kernels. CUDA is designed to work with programming languages such as C, C++, and Fortran. This accessibility makes it easier for specialists in parallel programming to use GPU resources, in contrast to prior APIs like Direct3D and OpenGL, which required advanced skills in graphics programming. CUDA-powered GPUs also support programming frameworks such as OpenMP, OpenACC, OpenCL, and HIP by compiling such code to CUDA.

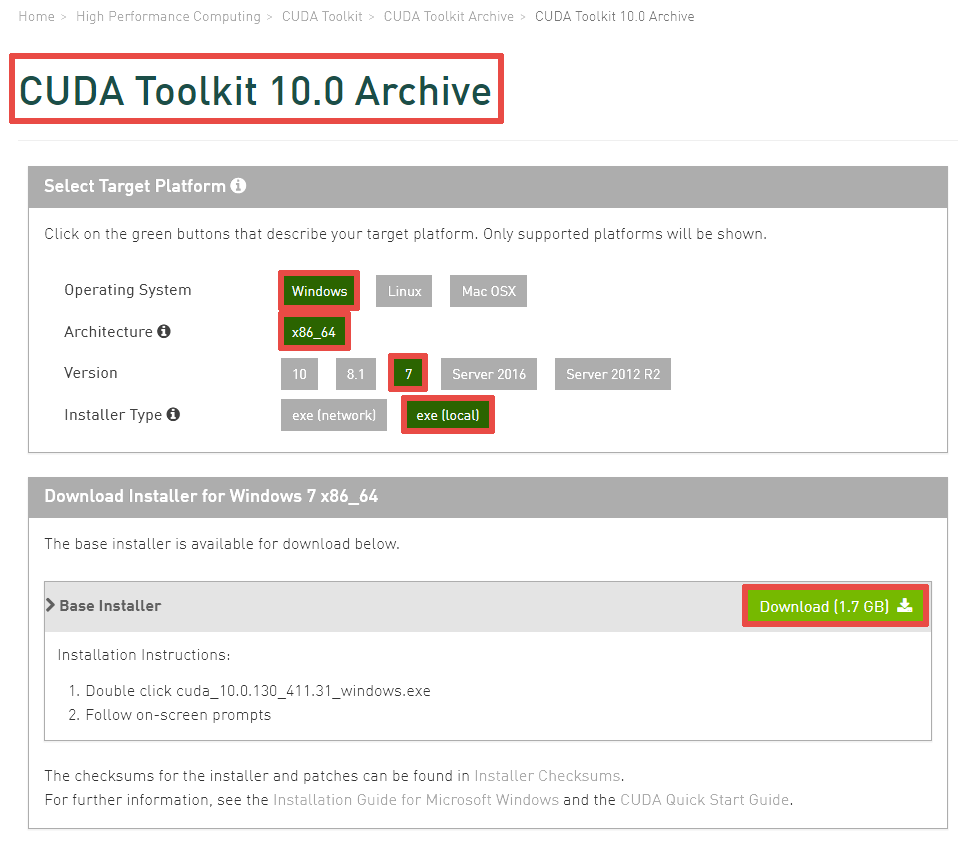

#Nvidia cuda toolkit 10.0 install#

In some installations, the `sudo apt-get -y install cuda` command will return an error stating that some dependencies cannot be installed. If so, execute the following command to force the installation and resume it: `sudo apt-get -o dpkg::Options::="--force-overwrite" install --fix-broken`

Verifying the installation

Executing `dpkg -l | grep cuda` lists the installed CUDA packages, which verifies that the installation is complete.

CUDA (or Compute Unified Device Architecture) is a parallel computing platform and application programming interface (API) that allows software to use certain types of graphics processing units (GPUs) for general-purpose processing, an approach called general-purpose computing on GPUs (GPGPU).
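The fallback and the `dpkg` check described above can be combined into a small helper script. The following is only a sketch under stated assumptions: the function names `install_cuda` and `count_cuda_packages` and the `APT` override variable are hypothetical and not part of any Nvidia tooling, and the flags are written in the double-dash form (`--force-overwrite`, `--fix-broken`) that dpkg and apt-get accept.

```shell
#!/usr/bin/env bash
# Sketch only: helper names and the APT override are illustrative assumptions.

# Count fully installed packages whose name contains "cuda".
# Reads `dpkg -l`-style lines on stdin; the "ii" status marks an installed package.
count_cuda_packages() {
    awk '$1 == "ii" && $2 ~ /cuda/ { n++ } END { print n + 0 }'
}

# Try the normal install first; if it fails, run the forced fix-broken
# command from the text. APT defaults to "sudo apt-get" and can be
# overridden to exercise the logic without root.
install_cuda() {
    local apt="${APT:-sudo apt-get}"
    if $apt -y install cuda; then
        return 0
    fi
    echo "install failed; retrying with --force-overwrite and --fix-broken" >&2
    $apt -o dpkg::Options::="--force-overwrite" install --fix-broken
}
```

A possible way to use it is `install_cuda && dpkg -l | count_cuda_packages`, where a count greater than zero suggests the CUDA packages are present. The script deliberately defines functions only, so sourcing it does not install anything by itself.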

#Nvidia cuda toolkit 10.0 update#

Installing from Debian repositories

Before installing CUDA on your Jetson Nano, make sure that you have completed the pre-install requisites found in this guide to ensure a smooth and hassle-free installation.

After completing the pre-install, execute the following commands to install the CUDA toolkit:

- `wget`
- `sudo mv cuda-ubuntu1804.pin /etc/apt/preferences.d/cuda-repository-pin-600`
- `wget`
- `sudo dpkg -i cuda-repo-ubuntu-local_11.0.2-450.51.05-1_b`
- `sudo apt-key add /var/cuda-repo-ubuntu-local/7fa2af80.pub`
- `sudo apt-get update`
- `sudo apt-get -y install cuda`

When installing the JetPack SDK from the Nvidia SDK Manager, CUDA and its supporting libraries, such as cuDNN and cuda-toolkit, are installed automatically and are ready to use afterwards, so nothing extra needs to be installed to get started with the CUDA libraries.

While installing from the CUDA repositories allows us to install the latest version available, the wiser option is to stick with either the JetPack SDK or the Debian repositories, where the most stable version of the framework is distributed.
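Whichever method is used, it can help to confirm which toolkit release actually ended up on the board. The sketch below assumes the usual `nvcc --version` output, which contains a line of the form `Cuda compilation tools, release X.Y, ...`. The function names `parse_nvcc_release` and `cuda_release` and the `NVCC` override variable are hypothetical, and the `/usr/local/cuda/bin` hint reflects the common default location rather than anything stated in this guide.

```shell
#!/usr/bin/env bash
# Sketch only: function names and the NVCC override are illustrative assumptions.

# Extract the "X.Y" release number from `nvcc --version` output on stdin.
parse_nvcc_release() {
    sed -n 's/.*release \([0-9][0-9]*\.[0-9][0-9]*\).*/\1/p' | head -n 1
}

# Print the installed toolkit release, or a hint if nvcc is not on PATH.
cuda_release() {
    local nvcc_bin="${NVCC:-nvcc}"
    if ! command -v "$nvcc_bin" >/dev/null 2>&1; then
        echo "nvcc not found; it is commonly located under /usr/local/cuda/bin" >&2
        return 1
    fi
    "$nvcc_bin" --version | parse_nvcc_release
}
```

Calling `cuda_release` after the installation prints only the release number, which makes it easy to compare against the version the rest of the setup expects.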

CUDA is written primarily in C/C++, and there is additional support for languages like Python and Fortran. The framework supports highly popular machine learning frameworks such as TensorFlow, Caffe2, CNTK, Databricks, H2O.ai, Keras, MXNet, PyTorch, Theano, and Torch. When correctly installed, the CPU can invoke CUDA functions on the GPU through the CUDA framework, which is what makes parallel computing possible. The flow diagram indicates the typical program flow when executing a GPU-accelerated application.

There are two main methods to install the CUDA toolkit on Jetson Nano (or any other Jetson board): the JetPack SDK and the Debian repositories.

Since the Jetson Nano is designed with special hardware, a special framework needs to be installed to make the best use of hardware-accelerated parallel computing on the GPU, and machine learning programs can then be written using it. Nvidia calls this framework, which enables parallel computing on the GPU, CUDA (Compute Unified Device Architecture).

#Nvidia cuda toolkit 10.0 series#

The Jetson Nano can be used as a general-purpose Linux-powered computer, which has advanced uses in machine learning inference and image processing thanks to its GPU-accelerated processor. In terms of parallel processing, the Jetson Nano easily outperforms the Raspberry Pi series and most other single-board computers, which typically consist only of a CPU with one or more cores and lack a dedicated GPU.